Human visual inspection has a ceiling, and most production lines have already hit it. Machine vision manufacturing systems use high-speed cameras and trained models to detect surface defects, misalignments, and packaging errors at a rate and consistency no human team can match. For entrepreneurs building a broader automation strategy (see "AI in industrial automation: what actually works in 2026"), quality control is often the fastest win on the floor. This page covers how machine vision manufacturing works, what it costs, and which platforms are worth your attention.

There is a version of quality control that most production operations are still running: a person standing at the end of a line, checking products by eye, flagging defects when they notice them. It is a system built on human attention — which means it is built on something that degrades over a shift, varies between inspectors, and scales poorly as output increases.

Machine vision manufacturing systems do not get tired. They do not have bad days. They do not miss the third defect in a row because the first two broke their concentration. And in 2026, they are no longer reserved for large automotive or semiconductor manufacturers with nine-figure capital budgets. They are accessible, modular, and increasingly fast to deploy across a wide range of production environments.

If your operation still relies primarily on manual inspection, the question is not whether machine vision manufacturing makes sense. The question is how much the current approach is already costing you.

What machine vision manufacturing actually means

Machine vision manufacturing is the use of camera systems, lighting hardware, and image analysis software to automatically inspect products, components, or materials during or after production. The system captures images or video of items moving through a production line and runs them through detection models trained to identify specific defect types, dimensional deviations, or assembly errors.

The core components of any machine vision manufacturing system are:

Imaging hardware: Industrial cameras — area scan for stationary inspection, line scan for continuous conveyor inspection — paired with controlled lighting designed to reveal specific surface characteristics. Lighting is more critical than most people expect. The right lighting angle can make a hairline crack clearly visible; the wrong one makes it invisible to both cameras and humans.

Image processing software: The layer that analyzes captured images against trained models. Modern platforms use deep learning, a type of pattern recognition that learns from labeled examples rather than hand-written rules. Detection accuracy generally improves as the models are retrained on more images of your actual production output.

Integration layer: The connection between the vision system and your production line controls. When a defect is detected, the system needs to trigger a physical response — activating a rejection mechanism, halting a line, or flagging an item for secondary review — which requires integration with your existing PLC or conveyor control system.
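To make the integration layer concrete, here is a minimal Python sketch of the decision step that sits between the detection model and the line controls. Everything in it is an assumption for illustration: the `Detection` record, the `Action` names, and the two thresholds are hypothetical, and a real deployment would pass the resulting action to the PLC or conveyor controller through whatever interface the vendor provides.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PASS = "pass"
    MANUAL_REVIEW = "manual_review"
    REJECT = "reject"


@dataclass(frozen=True)
class Detection:
    defect_type: str   # label emitted by the detection model (hypothetical)
    confidence: float  # model score between 0 and 1


def decide_action(detections, reject_threshold=0.90, review_threshold=0.50):
    """Map one item's detections to a line action using the highest score.

    The thresholds are illustrative defaults, not vendor recommendations.
    """
    top_score = max((d.confidence for d in detections), default=0.0)
    if top_score >= reject_threshold:
        return Action.REJECT
    if top_score >= review_threshold:
        return Action.MANUAL_REVIEW
    return Action.PASS


if __name__ == "__main__":
    item = [Detection("scratch", 0.62), Detection("dent", 0.31)]
    print(decide_action(item))  # borderline score, so the item goes to review
```

Keeping a middle "manual review" band is one way to handle borderline cases: clear defects are rejected automatically, and only uncertain items reach a human inspector.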

Where manual inspection consistently breaks down

The failure modes of manual inspection follow predictable patterns, and if you run a production operation, you have almost certainly seen them.

Fatigue-related miss rate increase: Studies across multiple manufacturing sectors document that human inspection accuracy drops measurably after the first two hours of a shift. By hour six or seven, miss rates for subtle defects can be two to three times higher than at the start of the shift. The defects do not change — the inspector’s capacity to catch them does.

Inter-inspector variability: Two trained inspectors looking at the same borderline defect will not always make the same call. This inconsistency creates quality disputes with customers, rework decisions that vary by shift, and reject rates that fluctuate without a clear operational explanation.

Speed ceiling: As production throughput increases, the number of items a human inspector can reliably examine per minute stays fixed. Machine vision manufacturing systems scale with the line: as long as the cameras, triggering, and processing hardware are specified for the line speed, faster throughput does not reduce detection accuracy.

Documentation gaps: Manual inspection relies on paper logs or manual data entry, which creates delays, errors, and gaps in your quality record. Machine vision systems capture every inspection result automatically, creating a timestamped, searchable record of every item that passed through the line.

For entrepreneurs who are building a full automation strategy, "Industrial AI applications: the uses you can’t ignore" maps how machine vision fits alongside predictive maintenance, demand forecasting, and energy optimization as part of a coherent investment sequence.

Industries where machine vision manufacturing is producing the clearest results

Machine vision manufacturing is not sector-specific. The underlying technology applies wherever there is a visual inspection requirement and a product moving at volume. The sectors with the most mature deployments and the most documented ROI data are:

Electronics and semiconductor manufacturing: Board inspection, solder joint verification, component placement accuracy, and wafer surface defect detection. The tolerance requirements in this sector pushed machine vision technology forward faster than almost any other industry, and the tooling reflects that maturity.

Automotive parts manufacturing: Surface finish inspection on machined components, weld quality verification, seal integrity checks, and assembly completeness confirmation. High volume combined with strict OEM quality requirements makes manual inspection economically unsustainable at scale.

Food and beverage processing: Label placement, fill level verification, cap and seal integrity, foreign object detection, and product dimensional consistency. Regulatory compliance requirements in food production add a documentation dimension that machine vision systems handle automatically.

Pharmaceutical manufacturing: Tablet surface inspection, blister pack completeness, label accuracy, and container integrity. The consequences of a defect reaching a patient make this one of the sectors with the strongest compliance-driven adoption of machine vision manufacturing.

Packaging and printing: Color consistency, print registration, barcode readability, and structural integrity of packaging before filling or sealing. Downstream costs from unreadable barcodes or misaligned labels at retail are disproportionate to the defect itself.

Platforms leading the machine vision manufacturing market in 2026

The market has several well-established players and a growing set of newer entrants built around deep learning rather than traditional rule-based vision. The distinction matters because deep learning systems handle natural variation in products, lighting conditions, and defect types far better than older rule-based systems, which require manual programming of every defect category.

Cognex is one of the longest-established names in machine vision manufacturing. Its In-Sight series offers a range of camera-integrated systems with built-in vision tools and a no-code configuration interface that makes initial setup accessible without a machine vision engineer on staff. Strong documentation, wide third-party integration support, and a large installer network make it a reliable choice for first deployments.

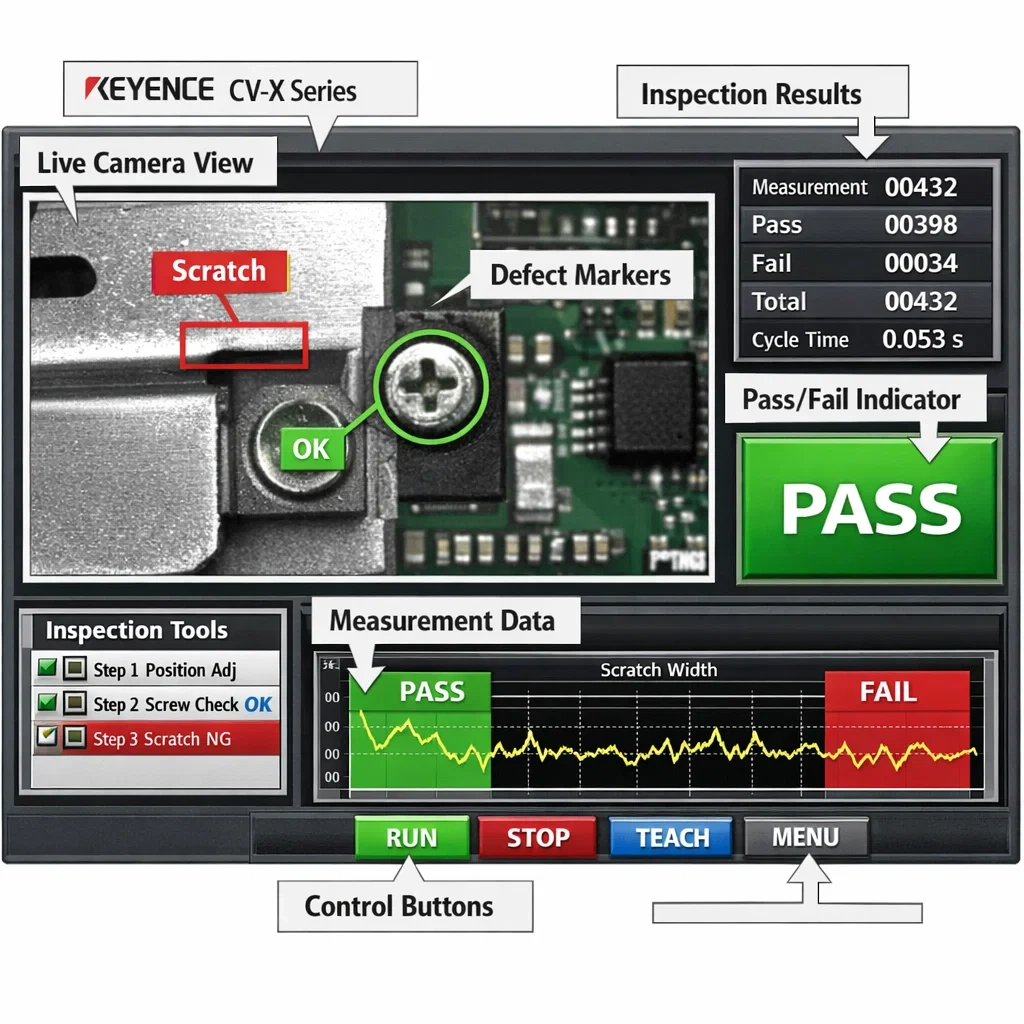

Keyence competes directly with Cognex on hardware quality and offers some of the most precise imaging systems in the mid-market segment. Its CV-X and XG-X series are particularly strong in high-speed inspection applications where image capture timing is critical.

Cognex ViDi (now integrated into the main Cognex platform) and Landing AI represent the deep learning end of the spectrum — systems designed specifically to handle complex, variable defects that rule-based vision cannot reliably detect. Landing AI’s visual inspection platform is particularly well-suited for manufacturers dealing with surface defects that are difficult to define with explicit rules.

Pickit and Mech-Mind are strong options for operations that need vision guidance for robotic picking and placement in addition to inspection — combining bin-picking guidance with quality verification in a single system.

What machine vision manufacturing costs — and what drives that number

Cost ranges in machine vision manufacturing vary enough that any single number is misleading without context. The variables that drive cost are:

Inspection complexity: A single-surface pass/fail check on a uniform product costs far less to deploy than a multi-angle inspection with dimensional measurement and multiple defect categories.

Line speed: Higher throughput requires faster cameras, more precise triggering, and more processing power — all of which increase hardware cost.

Integration requirements: A standalone inspection station with a simple reject mechanism is significantly cheaper to integrate than a system that feeds real-time data to your MES, triggers upstream process adjustments, and logs results to a compliance database.

Number of inspection points: Some operations need one camera at end-of-line. Others need multiple inspection stations across the production process. Each point adds hardware, software licensing, and integration cost.
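The link between line speed, number of images, and hardware cost comes down to a time budget. The sketch below is a rough back-of-the-envelope calculation, assuming a single processing unit handles every image for an item in sequence. The function names and the example figures are hypothetical.

```python
def per_image_budget_ms(items_per_minute, images_per_item=1):
    """Milliseconds available to capture and analyze each image.

    Assumes images are processed one after another on a single unit.
    """
    if items_per_minute <= 0 or images_per_item <= 0:
        raise ValueError("throughput and image count must be positive")
    return 60_000 / (items_per_minute * images_per_item)


def keeps_up(processing_ms, items_per_minute, images_per_item=1):
    """True if the measured per-image processing time fits the budget."""
    return processing_ms <= per_image_budget_ms(items_per_minute, images_per_item)


if __name__ == "__main__":
    # Illustrative line: 600 items per minute, one image each.
    print(per_image_budget_ms(600))        # 100.0 ms per image
    # The same line with four inspection angles per item.
    print(per_image_budget_ms(600, 4))     # 25.0 ms per image
    print(keeps_up(80, 600, 4))            # False: 80 ms does not fit in 25 ms
```

When the measured processing time exceeds the budget, the options are faster hardware, parallel processing units, or fewer images per item, which is why throughput and inspection points both show up in the price.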

A focused single-point inspection system for a mid-sized manufacturer typically falls in the $15,000 to $60,000 range for hardware and initial software configuration. Enterprise-scale multi-point systems with deep learning components and full MES integration can reach $200,000 or more. The ROI calculation, however, almost always favors deployment when you factor in the cost of defects currently reaching customers and the labor cost of the inspection process being replaced.
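The ROI reasoning can be written as a simple payback calculation. This is a minimal sketch with made-up inputs: only the system cost is chosen to fall inside the single-point range quoted above, and the monthly figures are hypothetical placeholders to be replaced with your own numbers.

```python
def payback_months(system_cost, monthly_defect_cost_avoided,
                   monthly_labor_saved, monthly_running_cost=0.0):
    """Months until cumulative net savings cover the up-front system cost.

    Returns None when the net monthly saving is zero or negative,
    meaning the system never pays for itself under these inputs.
    """
    net_monthly = (monthly_defect_cost_avoided
                   + monthly_labor_saved
                   - monthly_running_cost)
    if net_monthly <= 0:
        return None
    return system_cost / net_monthly


if __name__ == "__main__":
    # Hypothetical example: a $42,000 single-point system.
    months = payback_months(
        system_cost=42_000,
        monthly_defect_cost_avoided=4_000,  # returns, rework, chargebacks
        monthly_labor_saved=3_500,          # inspection hours redeployed
        monthly_running_cost=500,           # licensing and maintenance
    )
    print(months)  # 6.0
```

With these illustrative figures the net saving is $7,000 per month, so the system pays for itself in six months. The calculation is deliberately simple and ignores financing, downtime during installation, and the calibration period.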

The broader context for sequencing this investment alongside other automation decisions is covered in "AI in industrial automation: what actually works in 2026".

How to prepare your operation for a machine vision deployment

The deployments that run smoothly share a common preparation pattern. Before a camera is mounted or a software license is purchased, the following groundwork makes the difference between a system that works from day one and one that spends six months in calibration.

Define your defect library: Document every defect type you currently inspect for, with physical samples or high-quality images of each. This becomes the training dataset for your detection models. The more complete and representative your defect library, the faster the system reaches reliable detection accuracy.

Assess your lighting environment: Machine vision systems are sensitive to ambient light variation. Evaluate whether your inspection area has consistent, controllable lighting or whether environmental factors — skylights, shift-based lighting changes, reflective surfaces — will require engineered lighting solutions.

Map your rejection workflow: Know exactly what happens when a defect is detected before the system goes live. Is the item diverted automatically? Is it flagged for manual secondary review? Does the line halt? The physical and procedural response to a detection event needs to be defined and tested before production use.

Involve your quality team early: The people who currently perform manual inspection understand your defect types, your borderline cases, and your customer quality agreements better than anyone. Their input during system configuration directly improves detection accuracy and reduces the calibration period.
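One practical check during preparation is whether the defect library actually covers every defect type you inspect for. The sketch below counts labeled samples per type and reports the ones that fall short. The `min_samples` value of 50 is an arbitrary placeholder, not a recommendation from any platform, and the label names are hypothetical.

```python
from collections import Counter


def library_gaps(sample_labels, required_types, min_samples=50):
    """Return {defect_type: sample_count} for types below min_samples.

    sample_labels: one label per collected image or physical sample.
    required_types: every defect type the inspection must cover.
    """
    counts = Counter(sample_labels)
    return {
        defect: counts.get(defect, 0)
        for defect in required_types
        if counts.get(defect, 0) < min_samples
    }


if __name__ == "__main__":
    labels = ["scratch"] * 60 + ["dent"] * 10
    print(library_gaps(labels, ["scratch", "dent", "crack"]))
    # {'dent': 10, 'crack': 0}
```

Types that appear in the result need more samples before training starts. A type with zero samples, like the hypothetical "crack" here, is one the system cannot be expected to detect.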

Conclusion

Manual inspection is not a sustainable quality strategy for any operation running at meaningful volume. The fatigue factors, the consistency gaps, the speed ceiling, and the documentation burden all compound into a quality system that becomes more expensive and less reliable as your business grows. Machine vision manufacturing addresses all of those problems simultaneously — and the deployment path in 2026 is more accessible than it has ever been.

The operations that move on this now are not just improving their defect rates. They are building a quality infrastructure that scales with their growth without adding headcount, and that produces the documentation trail that increasingly demanding customers and regulators expect to see.